AWS Feed

Build regression models with Amazon Redshift ML

With the rapid growth of data, many organizations are finding it difficult to analyze their large datasets to gain insights. As businesses rely more and more on automation algorithms, machine learning (ML) has become a necessity to stay ahead of the competition.

Amazon Redshift, a fast, fully managed, widely used cloud data warehouse, natively integrates with Amazon SageMaker for ML. With Amazon Redshift ML, you can use simple SQL statements to create and train ML models from your data in Amazon Redshift and then use these models for a variety of use cases, such as classification of a binary or multiclass outcome or predicting a numeric value through regression. Amazon SageMaker Autopilot provides all the benefits of automatic model creation, but as an advanced user, you can also influence the model training by providing different parameters such as model type, objective, and so on.

Amazon Redshift ML allows you to address several ML challenges, such as the following:

- Binary classification – Predict a true/false outcome, such as whether a customer will churn. To explore this specific use case further, see Create, train, and deploy machine learning models in Amazon Redshift using SQL with Amazon Redshift ML.

- Multiclass classification – Identify the class of an input value within a discrete number of classes. For example, you can identify which will be the best-selling product.

- Regression – Predict a numerical outcome, like the price of a house or how many people will use a city’s bike rental service.

You can use Amazon Redshift ML to automate data preparation, pre-processing, and selection of problem type as depicted in this blog post. In this post, we assume that you have a good understanding of your data and what problem type you want to use for your use case. We demonstrate how to use Amazon Redshift ML to solve a regression problem predicting bike rental counts. We also provide some best practices for creating test data, validating your model, and using it for inference. We also show you how you can use the SageMaker console to troubleshoot the training process as an advanced user.

Prerequisites

As a prerequisite for implementing the example in this post, you need to set up an Amazon Redshift cluster with ML enabled on it. For the preliminary steps to get started, see Create, train, and deploy machine learning models in Amazon Redshift using SQL with Amazon Redshift ML.

Solution overview

In this post, we use Amazon Redshift ML to build a regression model that predicts the number of people that may use the city of Toronto’s bike sharing service at any given hour of a day. The model accounts for various aspects, including holidays and weather conditions. Because we need to predict a numerical outcome, we create a regression model.

We walk you through the following high-level steps:

- Input the raw data.

- Prepare the input data.

- Create the model.

- Validate the predictions.

Input the raw data

To prepare the raw data for this model, we populated three tables in Amazon Redshift using different public datasets.

| Table Name | Description | Attribution |

| Ridership | Bike Share Toronto Ridership Data for 2017 and 2018 | Contains information licensed under the Open Government Licence – Toronto |

| Weather | Government of Canada historical weather data for 2017 and 2018 | Based on Environment and Climate Change Canada data |

| Holiday | Historical holidays data by the University of Waterloo | Contains information provided by the University of Waterloo under license on an “as is” basis |

You can use the following script to create and load the data in these tables in Amazon Redshift, and use the Amazon Redshift Query Editor to run these SQL scripts:

To load the data, use the following COPY commands. Replace the AWS Identity and Access Management (IAM) role with the IAM role that you created as part of the prerequisite steps earlier.

Data preparation

Let’s discuss about how the data can be biased and how selecting the right distribution of data impacts accuracy. For most ML problems, data preparation is the most time-consuming process; it involves preparing the data, finding relevant attributes, and cleaning and curating it to be used as input to the ML model. Bias or anomalies in the input data distribution also play a key role in model accuracy, therefore it’s very important to curate that as much as possible. Let’s explore and prepare our input dataset.

Ridership

The ridership table contains Bike Share Toronto’s ridership information for 2017 and 2018. We performed the following data preparation steps to make it more meaningful for our ML model:

- Transform the records to all be in the local time zone. The data before July 2017 was provided in UTC, whereas all data after that was in Toronto’s local time zone, EST.

- Discard trips with a duration under 60 seconds.

- Discard trips with a duration longer than a day.

- Aggregate data to an hourly basis (rather than minute-level granularity) for better generalization.

- Break down the trip date column into

day,month,year,quarter,month_week, andweek_dayfor better correlation with the number of rides.

We carried out all these transformations using the following simple view:

Weather

The weather table contains Government of Canada historical weather data for 2017 and 2018 in UTC format. We performed the following data preparation activities:

- Transform the records from UTC to US/Eastern time zone.

- Aggregate the data to an hourly basis for better generalization.

- Because the majority of the attributes like

visibility_kmandwind_chillare empty in this dataset, we only usetemp_candprecip_amount_mmcolumns for our model.

We use the following view in Amazon Redshift to apply these transformations:

Trip data

In this step, we create a new table, trip_data, in Amazon Redshift to combine all relevant attributes from the input tables. We also create a random ordered attribute named serial_number to denote the serial number of each record:

The following is an example SELECT query:

The following table summarizes our output.

| Trip time | trip count | trip hour | trip day | trip month | trip year | trip quarter | trip month week | trip week day | temp c | precip amount mm | is holiday | serial number |

| 2017-07-04 23:00 | 105 | 23 | 4 | 7 | 17 | 3 | 1 | 3 | 20 | 0 | 0 | 1 |

| 2018-09-03 9:00 | 233 | 9 | 3 | 9 | 18 | 3 | 1 | 2 | 25 | 0 | 1 | 2 |

| 2017-09-29 20:00 | 185 | 20 | 29 | 9 | 17 | 3 | 5 | 6 | 12 | 0 | 0 | 3 |

| 2017-04-09 23:00 | 28 | 23 | 9 | 4 | 17 | 2 | 2 | 1 | 12 | 0 | 1 | 4 |

| 2017-02-01 16:00 | 175 | 16 | 1 | 2 | 17 | 1 | 1 | 4 | 2 | 0 | 0 | 5 |

We can create an ML model in Amazon Redshift to predict the trip_count column in the preceding table for any given trip time. But before that, we may want to view the relationship of different attributes of this table with our target attribute, trip_count. The Pearson correlation coefficient is a popular method to find how strong a relationship is between two attributes. It returns a value between -1 and 1, in which 1 indicates a strong positive relationship and -1 indicates a strong negative relationship. A result of zero indicates no relationship at all.

We use the stored procedure sp_correlation, available in the Amazon Redshift Utilities GitHub repo, to view the relationship between our target column trip_count and the other numeric attributes in our dataset. This stored procedure allows us to get correlation of an attribute with other attributes in a table in Amazon Redshift.

The following table summarizes our output.

| trip count | trip hour | trip day | trip month | trip year | trip quarter | trip month week | trip week day | temp c | precip amount mm | is holiday | serial number |

| 1 | 0.32 | 0.02 | 0.19 | 0.12 | 0.20 | 0.02 | 0.02 | 0.52 | -0.07 | -0.12 | 0.00 |

The output shows that temperature and hour of the day have a strong positive correlation with the number of bike rentals. Similarly, precipitation and holidays have a negative correlation with the number of bike rentals. But serial number data has zero correlation because it’s a random number and we can safely exclude such columns in our ML model.

Create a model in Amazon Redshift

Now that our data preparation steps are complete, we can use the create model statement in Amazon Redshift to create our ML regression model. But before that, we want to divide our data into two sets by splitting 80% of the dataset for training and 20% for validation, which a common practice in ML. The training data is input to the ML model to identify the best possible algorithm for the model. After the model is created, we use the validation data to validate the model accuracy. We create the tables training_data and validation_data using the 20% threshold for our random ordered column serial_number with the following code:

After that, we run the create model command in Amazon Redshift, which creates a regression model to predict the trip_count value for any input date and time:

We define the following parameters in our create model statement:

- Problem type – Because we’re predicting a numeric outcome, we provide the problem type, but it’s an optional parameter.

- Objective – We specified

MSE(mean square error) as our objective, which is a common metric for evaluation of regression problems. - S3 garbage collect – Setting this parameter to

OFFretains the intermediate dataset used to train the models by SageMaker, which may help us troubleshoot any issues. - Max runtime – This parameter denotes how long the model training can run. Specifying a larger value may help create a better tuned model. The default value for this parameter is 5,400 (90 minutes).

The preceding statement takes a few seconds to complete. It initiates an Autopilot process in the background to automatically build, train, and tune the best ML model for the input data. It then uses Amazon SageMaker Neo to deploy that model locally in the Amazon Redshift cluster as a user-defined function (UDF). You can use the SHOW MODEL command in Amazon Redshift to track the progress of your model creation, which should be in the READY state within the max_runtime parameter you defined while creating the model.

Validate predictions with mean square error

In this step, we evaluate the accuracy of our ML model against our validation data.

For regression problems, typical accuracy metrics are mean square error (MSE) and root mean square error (RMSE), which measure the distance between the predicted numeric target and the actual numeric answer, also known as ground truth. We square the differences between the actual and predicted values and then find the average to calculate the MSE. The square root of MSE computes the RMSE. A model with a low MSE and RMSE score indicates a good model.

While creating the model, Autopilot automatically splits the input data into train and validation sets and selects the model with the best objective metric, which is deployed in the Amazon Redshift cluster. We use the show model statement to view various metrics, including the calculated MSE by SageMaker while creating the model:

The following output shows an MSE of 1960.272217.

| Key | Value |

| Model Name | predict_rental_count |

| Schema Name | Public |

| Owner | Awsuser |

| Creation Time | Mon, 15.02.2021 16:13:18 |

| Model State | READY |

| validation:mse | 1960.272217 |

| Estimated Cost | 4.215379 |

| TRAINING DATA: | |

| Query | SELECT TRIP_COUNT, TRIP_HOUR, TRIP_DAY, TRIP_MONTH, TRIP_YEAR, TRIP_QUARTER, TRIP_MONTH_WEEK, TRIP_WEEK_DAY, TEMP_C, PRECIP_AMOUNT_MM, IS_HOLIDAY |

| FROM TRAINING_DATA | |

| Target Column | TRIP_COUNT |

| PARAMETERS: | |

| Model Type | Auto |

| Problem Type | Regression |

| Objective | MSE |

| Function Name | predict_rental_count |

| Function Parameters | trip_hour trip_day trip_month trip_year trip_quarter trip_month_week trip_week_day temp_c precip_amount_mm is_holiday |

| Function Parameter Types | int4 int4 int4 int4 int4 int4 int4 numeric numeric int4 |

| IAM Role | arn:aws:iam::xxxxxxxxxxxx:role/redshift-sagemaker-role |

| s3 Bucket | redshift-xxxxxxxxx-bucket |

| Max Runtime | 5400 |

Let’s run inference queries against our validation data using the following SQL query:

The following table summarizes our output.

| trip_time | actual_count | predicted_count | difference |

| 2017-09-29 20:00 | 185 | 246 | -61 |

| 2017-01-02 17:00 | 76 | 75 | 1 |

| 2018-12-24 18:00 | 70 | 111 | -41 |

| 2018-02-02 14:00 | 70 | 64 | 6 |

| 2018-07-08 22:00 | 273 | 299 | -26 |

We can also calculate the MSE and RMSE metrics based on our validation data:

We get the following output.

| MSE | RMSE |

| 1692.59 | 41.14 |

The RMSE value indicates that our model is accurate enough to the actual values from our validation dataset.

Troubleshooting

Although the Create Model statement in Amazon Redshift automatically takes care of initiating Autopilot process to build, train, and tune the best ML model and deploy that model in Amazon Redshift, you can also view the intermediate steps performed in this process, which may help you with troubleshooting if something goes wrong.

Amazon S3 bucket

When you create the model, you need to enter an Amazon Simple Storage Service (Amazon S3) bucket name as the value for the parameter s3_bucket. This bucket is used to share training data and artifacts between Amazon Redshift and SageMaker. Amazon Redshift creates a subfolder in this bucket prior to unloading the training data. When training is complete, it deletes this subfolder and its contents unless you set the parameter s3_garbage_collect to off, which may be used for troubleshooting purposes. For more information, see CREATE MODEL.

SageMaker console

If you navigate to the SageMaker console, you can view the training jobs and hyperparameter tuning jobs initiated by the Create Model process. You need appropriate access permissions, like the AmazonSageMakerReadOnly policy, to view these details. For more information, see AWS Managed (Predefined) Policies for Amazon SageMaker.

In the hyperparameter tuning jobs section on the SageMaker console, you can view the best training job selected by SageMaker based on the MSE metrics, along with different hyperparameter values. The following screenshot shows that our best training job had an MSE value of 1960.272217, similar to what we saw in the Amazon Redshift show model statement.

Amazon SageMaker Studio

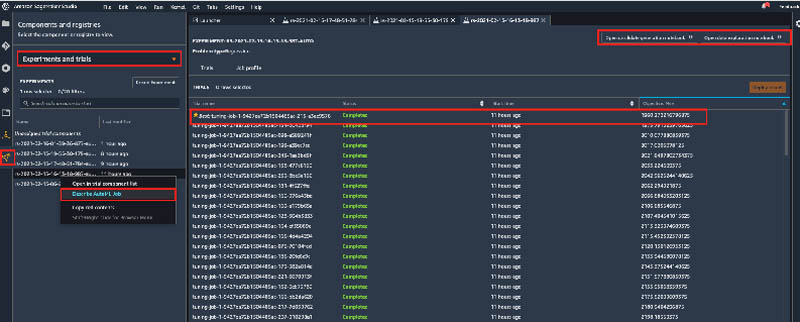

Amazon SageMaker Studio is a web-based integrated development environment (IDE) for ML. You can view your model training activities on the Experiments and trials page. You can also view job metrics by choosing the option Describe AutoML Job, as shown in the following screenshot.

Studio also allows you to view two useful notebooks:

- Data exploration notebook – Describes what Autopilot learned about the input data from Amazon Redshift

- Candidate generation notebook – Shows how Autopilot used this information about the data to generate candidates

For more information, see Amazon SageMaker Autopilot notebooks generated to manage AutoML tasks.

Conclusion

Amazon Redshift ML makes it easy for users of all skill levels to use ML technology. With no prior ML knowledge, you can use Amazon Redshift ML to gain business insights for your data. You provide the data that you want to train a model. Then Amazon Redshift ML creates a model that capture patterns in the input data. You can then use that model to make predictions using familiar SQL commands. To learn more about Amazon RedShift ML, visit the webpage.

About the Authors

Manash Deb is a Senior Analytics Specialist Solutions Architect at AWS. He has worked on building end-to-end data driven solutions in different database and data warehousing technologies for over fifteen years. He loves to learn new technologies and solving, automating and simplifying customer problems with easy-to-use cloud data solutions on AWS.

Manash Deb is a Senior Analytics Specialist Solutions Architect at AWS. He has worked on building end-to-end data driven solutions in different database and data warehousing technologies for over fifteen years. He loves to learn new technologies and solving, automating and simplifying customer problems with easy-to-use cloud data solutions on AWS.

Debu Panda, a principal product manager at AWS, is an industry leader in analytics, application platform, and database technologies and has more than 25 years of experience in the IT world.

Debu Panda, a principal product manager at AWS, is an industry leader in analytics, application platform, and database technologies and has more than 25 years of experience in the IT world.

Gokul Soundararajan is a principal engineer at AWS and received a PhD from University of Toronto and has been working in the areas of storage, databases, and analytics.

Gokul Soundararajan is a principal engineer at AWS and received a PhD from University of Toronto and has been working in the areas of storage, databases, and analytics.

Piali Das is a Senior Software Engineer in the Amazon SageMaker Autopilot team. She previously contributed to building SageMaker Algorithms. She enjoys scientific programming in general and has developed an interest in machine learning and distributed systems.

Piali Das is a Senior Software Engineer in the Amazon SageMaker Autopilot team. She previously contributed to building SageMaker Algorithms. She enjoys scientific programming in general and has developed an interest in machine learning and distributed systems.