Ibotta builds a self-service data lake with AWS Glue

This is a guest post co-written by Erik Franco at Ibotta.

Ibotta is a free cash back rewards and payments app that gives consumers real cash for everyday purchases when they shop and pay through the app. Ibotta provides thousands of ways for consumers to earn cash on their purchases by partnering with more than 1,500 brands and retailers.

At Ibotta, we process terabytes of data every day. Our vision is to allow for these datasets to be easily used by data scientists, decision-makers, machine learning engineers, and business intelligence analysts to provide business insights and continually improve the consumer and saver experience. This strategy of data democratization has proven to be a key pillar in the explosive growth Ibotta has experienced in recent years.

This growth has also led us to rethink and rebuild our internal technology stacks. For example, as our datasets began to double in size every year and increasingly contained complex, nested JSON data structures, it became apparent that our data warehouse was no longer meeting the needs of our analytics teams. To solve this, Ibotta adopted a data lake solution. The data lake proved to be a huge success because it was a scalable, cost-effective solution that continued to fulfill the mission of data democratization.

The rapid growth that was the impetus for the transition to a data lake has now also forced upstream engineers to move from a monolithic architecture to a microservice architecture. We now use event-driven microservices to build fault-tolerant and scalable systems that can react to events as they occur. For example, we have a microservice in charge of payments. Whenever a payment occurs, the service emits a PaymentCompleted event. Other services may listen to these PaymentCompleted events to trigger other actions, such as sending a thank you email.

In this post, we share how Ibotta built a self-service data lake using AWS Glue. AWS Glue is a serverless data integration service that makes it easy to discover, prepare, and combine data for analytics, machine learning, and application development.

Challenge: Fitting flexible, semi-structured schemas into relational schemas

The move to an event-driven architecture, while highly valuable, brought its own difficulties. Our analytics teams use these events for use cases where low-latency access to real-time data is expected, such as fraud detection. These real-time systems have fostered a new area of growth for Ibotta and complement our existing batch-based data lake architecture. However, this change presented two challenges:

- Our events are semi-structured and deeply nested JSON objects that don’t translate well to relational schemas. Events are also flexible in nature. This flexibility allows our upstream engineering teams to make changes as needed and thereby allows Ibotta to move quickly in order to capitalize on market opportunities. Unfortunately, this flexibility makes it very difficult to keep schemas up to date.

- Adding to these challenges, in the last 3 years, our analytics and platform engineering teams have doubled in size. Our data processing team, however, has stayed the same size, largely because high industry demand makes it difficult to hire qualified data engineers who possess specialized skills in developing scalable pipelines. This meant that our data processing team couldn’t keep up with the requests from our analytics teams to onboard new data sources.
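To make the first challenge concrete, the following minimal sketch infers a flat path-to-type schema from two versions of the same event and reports what changed between them. The event fields (`payment.amount`, `items`, and so on) and the helper names `infer_schema` and `diff_schemas` are hypothetical and chosen only for illustration; they are not taken from Ibotta's actual events or code.

```python
def infer_schema(value, prefix=""):
    """Return a {dotted_path: type_name} mapping for a parsed JSON value."""
    if isinstance(value, dict):
        schema = {}
        for key, child in value.items():
            path = f"{prefix}.{key}" if prefix else key
            schema.update(infer_schema(child, path))
        return schema
    if isinstance(value, list):
        schema = {}
        for item in value:
            # "[]" marks a path that passes through an array
            schema.update(infer_schema(item, f"{prefix}[]"))
        return schema or {f"{prefix}[]": "unknown"}
    return {prefix: type(value).__name__}


def diff_schemas(old, new):
    """Compare two inferred schemas and list added, removed, and retyped paths."""
    shared = set(old) & set(new)
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(p for p in shared if old[p] != new[p]),
    }


# Hypothetical event before and after an upstream change.
event_v1 = {"event": "PaymentCompleted", "payment": {"id": "p-1", "amount": 20}}
event_v2 = {
    "event": "PaymentCompleted",
    "payment": {"id": "p-1", "amount": "20.00"},
    "items": [{"sku": "abc", "qty": 2}],
}

drift = diff_schemas(infer_schema(event_v1), infer_schema(event_v2))
print(drift)
```

Here a single upstream release both retypes `payment.amount` and adds a nested array, which is the kind of change that a hand-maintained relational schema struggles to follow.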

Solution: A self-service data lake

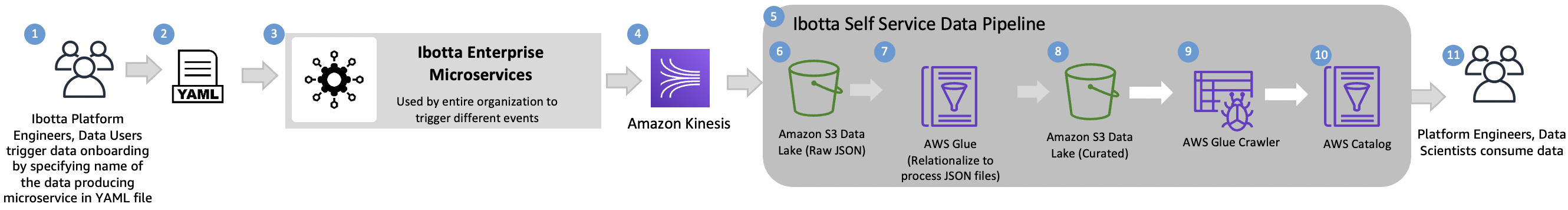

To solve these issues, we decided that it wasn’t enough for the data lake to provide self-service data consumption features. We also needed self-service data pipelines. These would provide both the platform engineering and analytics teams with a path to make their data available within the data lake and with minimal to no data engineering intervention necessary. The following diagram illustrates our self-service data ingestion pipeline.

The pipeline includes the following components:

- Ibotta data stakeholders – Our internal data stakeholders wanted the capability to automatically onboard datasets. This user base includes platform engineers, data scientists, and business analysts.

- Configuration file – Our data stakeholders update a YAML file with specific details on what dataset they need to onboard. Sources for these datasets include our enterprise microservices.

- Ibotta enterprise microservices – Microservices make up the bulk of our Ibotta platform. Many of these microservices utilize events to asynchronously communicate important information. These events are also valuable for deriving analytics insights.

- Amazon Kinesis – After the configuration file is updated, data is immediately streamed to Amazon Kinesis. Amazon Kinesis makes it easy to collect, process, and analyze real-time, streaming data so you can get timely insights and react quickly to new information. Streaming the data through Kinesis Data Streams and Kinesis Data Firehose gives us the flexibility to analyze the data in real time while also allowing us to store the data in Amazon Simple Storage Service (Amazon S3).

- Ibotta self-service data pipeline – This is the starting point of our data processing. We use Apache Airflow to orchestrate our pipelines once every hour.

- Amazon S3 raw data – Our data lands in Amazon S3 without any transformation. The complex nature of the JSON is retained for future processing or validation.

- AWS Glue – Our goal now is to take the complex nested JSON and create a simpler structure. AWS Glue provides a set of built-in transforms that we use to process this data. One of the transforms is Relationalize—an AWS Glue transform that takes semi-structured data and transforms it into a format that can be more easily analyzed by engines like Presto. This feature means that our analytics teams can continue to use the analytics engines they’re comfortable with and thereby lessen the impact of transitioning from relational data sources to semi-structured event data sources. The Relationalize function can flatten nested structures and create multiple dynamic frames. We use 80 lines of code to convert any JSON-based microservice message to a consumable table. We have provided this code base here as a reference and not for reuse.

- Amazon S3 curated – We then store the relationalized structures in Parquet format in Amazon S3.

- AWS Glue crawler – AWS Glue crawlers allow us to automatically discover schemas and catalog them in the AWS Glue Data Catalog. This feature is a core component of our self-service data pipelines because it removes the requirement of having a data engineer manually create or update the schemas. Previously, if a change needed to occur, it flowed through a communication path that included platform engineers, data engineers, and analytics. AWS Glue crawlers effectively remove the data engineers from this communication path. This means new datasets or changes to datasets are made available quickly within the data lake. It also frees up our data engineers to continue working on improvements to our self-service data pipelines and other data paved roadmap features.

- AWS Glue Data Catalog – A common problem in growing data lakes is that the datasets can become harder and harder to work with. A common reason for this is a lack of discoverability of data within the data lake as well as a lack of clear understanding of what the datasets are conveying. The AWS Glue Data Catalog works in conjunction with AWS Glue crawlers to provide data lake users with searchable metadata for different data lake datasets. As AWS Glue crawlers discover new datasets or updates, they’re recorded in the Data Catalog. You can then add descriptions at the table or field level for these datasets. This cuts down on the level of tribal knowledge that exists between various data lake consumers and makes it easy for these users to self-serve from the data lake.

- End-user data consumption – The end-users are the same internal data stakeholders called out in the first component of this list.
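The flattening step described in the AWS Glue component can be illustrated without a Glue environment. The following is a minimal pure-Python sketch of the idea behind relationalizing, not the AWS Glue `Relationalize` implementation and not Ibotta's job code: nested objects become dotted column names on the parent row, and each array becomes a child table whose rows link back to the parent through a generated key. The function name `relationalize`, the `id` and `index` column names, the `root_items` table naming, and the sample event are all assumptions made for this example.

```python
import itertools


def relationalize(record, root="root"):
    """Flatten one nested record into {table_name: [row, ...]}."""
    tables = {}
    ids = itertools.count(1)  # generates keys that link a parent row to child rows

    def add_row(table, obj, base_columns):
        row = dict(base_columns)
        flatten(obj, "", row, table)
        tables.setdefault(table, []).append(row)

    def flatten(obj, prefix, row, table):
        for key, value in obj.items():
            column = f"{prefix}.{key}" if prefix else key
            if isinstance(value, dict):
                # Nested object: keep it on the same row under a dotted name.
                flatten(value, column, row, table)
            elif isinstance(value, list):
                # Array: store a link key on the parent, rows go to a child table.
                link = next(ids)
                row[column] = link
                for index, item in enumerate(value):
                    item_obj = item if isinstance(item, dict) else {"val": item}
                    add_row(f"{table}_{column}", item_obj, {"id": link, "index": index})
            else:
                row[column] = value

    add_row(root, record, {})
    return tables


# Hypothetical nested event, for illustration only.
event = {
    "event": "PaymentCompleted",
    "payment": {"id": "p-1", "amount": 20.0},
    "items": [{"sku": "abc", "qty": 2}, {"sku": "xyz", "qty": 1}],
}

for name, rows in relationalize(event).items():
    print(name, rows)
```

Each resulting table is flat, so it could be written out as Parquet and queried with a join on the link key. The sketch ignores column-name collisions (for example, an array element that already has an `id` field), which a production transform would need to handle.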

Benefits

The AWS Glue capabilities we described make it a core component of building our self-service data pipelines. When we initially adopted AWS Glue, we saw a threefold decrease in our operating expenses (OPEX) as compared to our previous data pipelines. This was further enhanced when AWS Glue moved to per-second billing. To date, AWS Glue has allowed us to realize a fivefold decrease in OPEX. Also, AWS Glue requires little to no manual intervention to ingest and process our more than 200 complex JSON objects. This allows Ibotta to utilize AWS Glue each day as a key component in providing actionable data to the organization’s growing analytics and platform engineering teams.

We took away the following learnings in building self-service data platforms:

- Define schema contracts when possible – When possible, ask your teams to predefine schemas (contracts) in a framework such as Protocol Buffers or Apache Avro. This helps ensure that data types remain consistent, thereby removing manual interventions. As an added bonus, both Protocol Buffers and Apache Avro support schema evolution that’s compatible with the schema evolution done in the data lake.

- Keep source schemas simple – Avoid complex types as much as possible. Types such as arrays, especially nested arrays, complicate the relationalize process, thereby making self-service pipeline creation complex.

- Define infrastructure and standards early on – Infrastructure standards can further help automate self-service pipelines by providing expected behaviors that you can automate around. For example, we’ve defined naming conventions for our SNS and Kinesis topics. If an event is called “PaymentCompleted”, then we should have a corresponding topic named “payment-completed-events”. This way, we can always deduce the topic name from the event name alone, which helps with automation.

- Serverless – We prefer serverless technologies because they remove server management, which reduces operational burden even in cloud environments.
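The naming convention mentioned above lends itself to a small helper. The sketch below derives a topic name from an event name, based only on the single “PaymentCompleted” to “payment-completed-events” example given in this post; the function name `topic_name_for_event` is hypothetical, and the handling of other event names is an assumption about how the convention generalizes.

```python
import re


def topic_name_for_event(event_name: str) -> str:
    """Derive a kebab-case topic name such as 'payment-completed-events'."""
    # Insert a hyphen wherever a lowercase letter or digit is followed by a capital.
    kebab = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "-", event_name).lower()
    return f"{kebab}-events"


print(topic_name_for_event("PaymentCompleted"))
```

Because the mapping is deterministic, a pipeline can look up the source topic from the event name in a configuration file without a separate registry. Event names containing acronyms (consecutive capital letters) are not split by this sketch and would need an explicit rule.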

Conclusion and next steps

With the self-service data lake we have established, our business teams are realizing the benefits of speed and agility. As next steps, we’re going to improve our self-service pipeline with the following features:

- AWS Glue streaming – Use AWS Glue streaming for real-time relationalization. With AWS Glue streaming, we can simplify our self-service pipelines by potentially getting rid of our orchestration layer while also getting data into the data lake sooner.

- Support for ACID transactions – Implement data formats in the data lake that allow for ACID transactions. A benefit of this ACID layer is the ability to merge streaming data into data lake datasets.

- Simplify data transport layers – Unify the data transport layers between the upstream platform engineering domains and the data domain. From the time we first implemented an event-driven architecture at Ibotta to today, AWS has offered new services such as Amazon EventBridge and Amazon Managed Streaming for Apache Kafka (Amazon MSK) that have the potential to simplify certain facets of our self-service and data pipelines.

We hope that this blog post will inspire your organization to build a self-service data lake using serverless technologies to accelerate your business goals.

About the Authors

Erik Franco is a Data Architect at Ibotta and is leading Ibotta’s implementation of its next-generation data platform. Erik enjoys fishing and is an avid hiker. You can often find him hiking one of the many trails in Colorado with his lovely wife Marlene and wonderful dog Sammy.

Shiv Narayanan is Global Business Development Manager for Data Lakes and Analytics solutions at AWS. He works with AWS customers across the globe to strategize, build, develop and deploy modern data platforms. Shiv loves music, travel, food and trying out new tech.

Matt Williams is a Senior Technical Account Manager for AWS Enterprise Support. He is passionate about guiding customers on their cloud journey and building innovative solutions for complex problems. In his spare time, Matt enjoys experimenting with technology, all things outdoors, and visiting new places.