Amazon Web Services Feed

Software Patch Management Using AWS Serverless CI/CD

Cloud computing adoption has been increasing rapidly, with enterprises around the globe choosing various migration patterns during their cloud journey. Moving monolithic legacy applications to the cloud as-is, an approach known as “lift-and-shift”, is one of the main drivers of cloud migration. As customers become more familiar with migration patterns, lift-and-shift workloads should be optimized to take full advantage of cloud-native tools.

If proper deployment processes and release planning are not in place, code migrations can be challenging and expensive. Many organizations are adopting agile methods for application development and are actively pursuing automation and DevOps practices. CI/CD (Continuous Integration and Continuous Deployment) has become a key component of the software development lifecycle and is the backbone of DevOps.

The need to patch and deploy applications in monolithic and legacy environments is still a reality for many enterprises. Releasing new features into production is challenging because of application complexity, external dependencies, and the coordination of multiple teams across a chain of procedures. This blog post aims to help application and operations teams set up a serverless software patch management pipeline.

Overview

Applications require continuous updates to address security vulnerabilities and fix software bugs. A poorly designed patch strategy can lead to SLA breaches and violations of business continuity policies. Software updates can also introduce bugs, performance issues, and failures. The patch management lifecycle is integral to an organization’s service management strategy because it provides prescriptive guidance on how and when patches are applied. One way to prevent production outages is to test patches regularly in lower environments and promote only approved patches to higher environments. This post walks you through setting up a serverless software patch management pipeline.

This solution was developed to introduce the customer to general DevOps concepts and to demonstrate the power of DevOps processes and methodology in the software development lifecycle. The solution is built as Infrastructure as Code (IaC) using Terraform.

Prerequisites

You need an AWS account with permissions to create new resources. For more information, see How do I create and activate a new Amazon Web Services account?

This blog post assumes you already have some familiarity with Terraform. We recommend that you review the HashiCorp documentation for getting started to understand the basics of the platform.

All the code used in this blog post is available in a GitHub repository for you to learn from, modify, and customize.

What you build

The following AWS services are used to build this solution:

AWS CodePipeline – Continuous delivery service that enables you to model, visualize, and automate the steps required to release your software.

Amazon S3 bucket – CodePipeline creates an Amazon S3 bucket in the same region as the pipeline to store artifacts for all pipelines in that region associated with your account.

AWS CodeBuild – Fully managed build service that compiles your source code, runs unit tests, and produces artifacts that are ready to deploy.

AWS Systems Manager – AWS Systems Manager Run Command lets you remotely and securely manage the configuration of your managed instances.

Amazon Simple Notification Service (SNS) – Web service that enables applications, end users, and devices to instantly send and receive notifications from the cloud.

AWS Lambda – Compute service that lets you run code without provisioning or managing servers. AWS Lambda runs your code only when needed and scales automatically, from a few requests per day to thousands per second.

AWS Identity and Access Management (IAM) – Enables you to manage access to AWS services and resources securely.

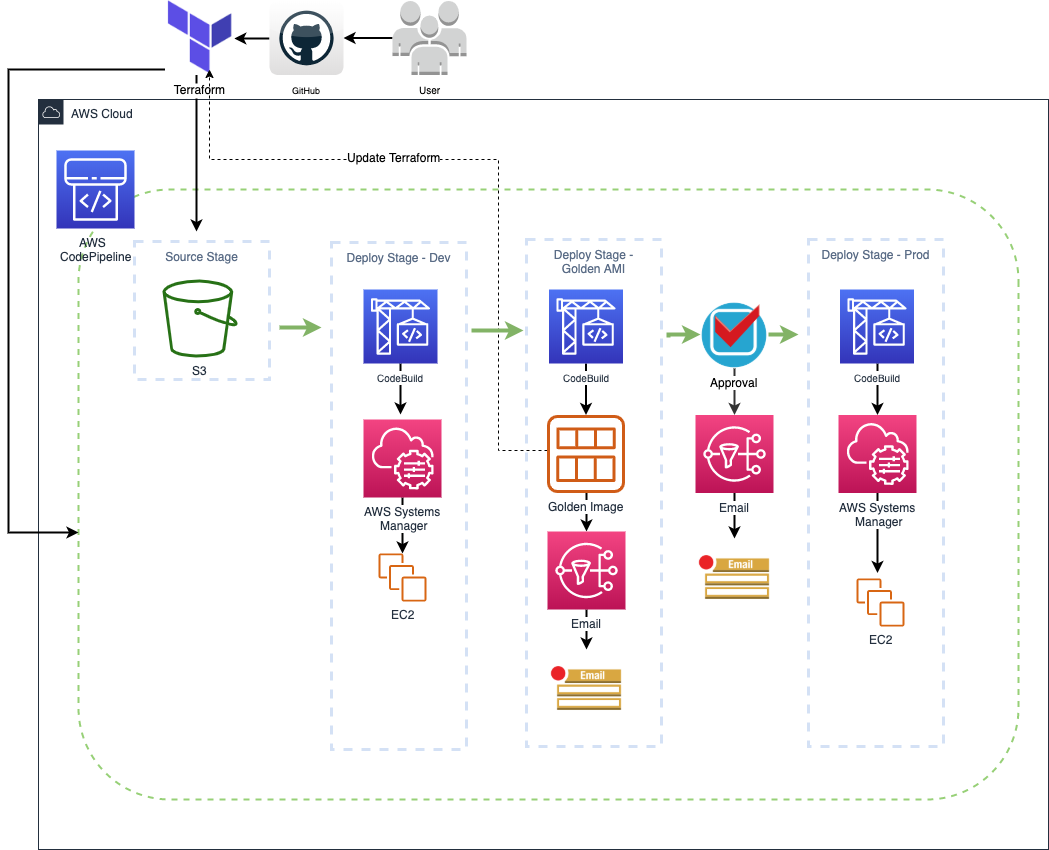

Architecture

Patch deployment is a multi-step process that requires interaction with several AWS services. First, the user uploads the patch file to a patch source repository. This solution uses an Amazon S3 bucket, but it can also work with other repositories such as GitHub.
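The upload step can be sketched as follows. This is a minimal illustration, not the code from the GitHub repository: it assumes a boto3 S3 client is passed in, and the bucket name, object key, and the `upload_patch` helper are hypothetical. It relies on two properties of the CodePipeline S3 source action: the source bucket must have versioning enabled, and the action watches one fixed object key, so each patch is uploaded as a new version of that same key.

```python
from typing import Any


def upload_patch(s3: Any, bucket: str, key: str, path: str) -> None:
    """Upload a patch file as a new version of the pipeline's source object.

    `s3` is assumed to be a boto3 S3 client; `upload_patch` is a hypothetical
    helper, not part of the original solution.
    """
    # The CodePipeline S3 source action requires a versioned bucket.
    # get_bucket_versioning returns no "Status" if versioning was never enabled.
    status = s3.get_bucket_versioning(Bucket=bucket).get("Status")
    if status != "Enabled":
        raise RuntimeError(f"bucket {bucket} must have versioning enabled")
    # Overwrite the fixed key the source action watches; S3 keeps the old version.
    s3.upload_file(path, bucket, key)
```

A call might look like `upload_patch(boto3.client("s3"), "patch-source-bucket", "patches/patch.zip", "patch.zip")`, where all three names are placeholders for whatever your pipeline's source action is configured with.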

AWS CodePipeline is configured to poll the S3 bucket periodically as its primary source, and the pipeline starts as soon as a patch is uploaded. Before the patch is deployed, AWS CodePipeline sends a manual approval email through an SNS topic. After the approval is granted, an AWS CodeBuild project starts the deployment process. AWS CodeBuild runs custom Python code that identifies the target instances based on tag parameters passed in through AWS CodeBuild environment variables.
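The tag-based target lookup could look roughly like the sketch below. It is an illustration under stated assumptions, not the post's actual Python code: the environment variable names `TARGET_TAG_KEY` and `TARGET_TAG_VALUES` are hypothetical, and an EC2 client (for example `boto3.client("ec2")`) is passed in so the logic can be exercised without AWS credentials. It uses the `describe_instances` paginator, because a single response may not contain every matching instance.

```python
from typing import Any, Dict, List, Mapping


def build_tag_filters(env: Mapping[str, str]) -> List[Dict[str, Any]]:
    """Turn hypothetical CodeBuild environment variables into EC2 filters.

    TARGET_TAG_KEY and TARGET_TAG_VALUES are illustrative names only.
    """
    key = env["TARGET_TAG_KEY"]
    # Accept a comma-separated list, e.g. "app-server, batch-server".
    values = [v.strip() for v in env["TARGET_TAG_VALUES"].split(",") if v.strip()]
    if not values:
        raise ValueError("TARGET_TAG_VALUES must contain at least one value")
    return [
        {"Name": f"tag:{key}", "Values": values},
        # Stopped or terminated instances cannot execute remote commands.
        {"Name": "instance-state-name", "Values": ["running"]},
    ]


def instance_ids(page: Mapping[str, Any]) -> List[str]:
    """Flatten one DescribeInstances response page into instance IDs."""
    return [
        inst["InstanceId"]
        for reservation in page.get("Reservations", [])
        for inst in reservation.get("Instances", [])
    ]


def find_targets(ec2_client: Any, env: Mapping[str, str]) -> List[str]:
    """Return the sorted IDs of running instances that match the tag filter."""
    paginator = ec2_client.get_paginator("describe_instances")
    ids: List[str] = []
    for page in paginator.paginate(Filters=build_tag_filters(env)):
        ids.extend(instance_ids(page))
    return sorted(ids)
```

Inside CodeBuild this might be invoked as `find_targets(boto3.client("ec2"), os.environ)`. Injecting the client rather than creating it inside the function is a design choice that keeps the filter logic testable.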

Once the targets are identified by their tags, a command document is sent to AWS Systems Manager to run a remote command on them. Systems Manager runs the patching command on each EC2 instance sequentially and waits for the result before processing the next command.
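The sequential, wait-for-result behavior can be sketched with the Systems Manager `send_command` and `get_command_invocation` APIs. This is a minimal illustration assuming a boto3 SSM client is passed in; the AWS-managed `AWS-RunShellScript` document stands in for the post's command document, and the patch commands, polling interval, and poll limit are hypothetical. The `sleep` function is injectable so the logic can be tested without waiting.

```python
import time
from typing import Any, Callable, Dict, List

# Run Command statuses after which an invocation will not change again.
TERMINAL_STATUSES = {"Success", "Cancelled", "TimedOut", "Failed"}


def wait_for_command(ssm: Any, command_id: str, instance_id: str,
                     sleep: Callable[[float], None] = time.sleep,
                     interval: float = 5.0, max_polls: int = 360) -> str:
    """Poll GetCommandInvocation until the command reaches a terminal status."""
    for _ in range(max_polls):
        # Sleeping first gives SSM time to register the invocation
        # after SendCommand returns.
        sleep(interval)
        status = ssm.get_command_invocation(
            CommandId=command_id, InstanceId=instance_id)["Status"]
        if status in TERMINAL_STATUSES:
            return status
    raise TimeoutError(f"command {command_id} on {instance_id} did not finish")


def patch_sequentially(ssm: Any, instance_ids: List[str], commands: List[str],
                       sleep: Callable[[float], None] = time.sleep) -> Dict[str, str]:
    """Patch one instance at a time and stop at the first failure."""
    results: Dict[str, str] = {}
    for instance_id in instance_ids:
        response = ssm.send_command(
            InstanceIds=[instance_id],
            DocumentName="AWS-RunShellScript",  # assumption: AWS-managed shell document
            Parameters={"commands": commands},
        )
        status = wait_for_command(ssm, response["Command"]["CommandId"],
                                  instance_id, sleep=sleep)
        results[instance_id] = status
        if status != "Success":
            # An uncaught exception makes the script exit non-zero, which fails
            # the CodeBuild build and, with it, the pipeline stage.
            raise RuntimeError(f"patching failed on {instance_id}: {status}")
    return results
```

Processing one instance at a time limits the blast radius of a bad patch: if the first instance fails, the remaining instances are never touched.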

If a command fails, AWS Systems Manager (SSM) returns a failed status to AWS CodeBuild, which in turn stops the pipeline. Once the patch is deployed and all commands have run successfully, AWS CodeBuild issues an API call to create an image from the running EC2 instance and marks it as a golden image. After the image is created, the new AMI ID is stored in AWS Systems Manager Parameter Store for future reference. An SNS notification is sent once the new golden image becomes available.
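The golden-image step could be sketched as below, assuming boto3 EC2, SSM, and SNS clients are passed in. The image naming scheme, the `Golden` tag used as the "golden" marker, the parameter name, and the topic ARN are all hypothetical. Whether to reboot during image creation is also an assumption: the sketch reboots for file-system consistency.

```python
from typing import Any


def publish_golden_image(ec2: Any, ssm: Any, sns: Any, instance_id: str,
                         parameter_name: str, topic_arn: str, timestamp: str) -> str:
    """Create an AMI from a patched instance, record its ID, and announce it."""
    # AMI names allow only a limited character set (no colons), so pass a
    # compact timestamp such as "20240101T120000".
    response = ec2.create_image(
        InstanceId=instance_id,
        Name=f"golden-{instance_id}-{timestamp}",
        # Rebooting gives a consistent file system; NoReboot=True avoids
        # downtime but does not guarantee file-system integrity.
        NoReboot=False,
        # Hypothetical marker tag identifying golden images.
        TagSpecifications=[{"ResourceType": "image",
                            "Tags": [{"Key": "Golden", "Value": "true"}]}],
    )
    ami_id = response["ImageId"]
    # Block until the AMI reaches the "available" state.
    ec2.get_waiter("image_available").wait(ImageIds=[ami_id])
    # Overwrite the parameter so it always points at the latest golden image.
    ssm.put_parameter(Name=parameter_name, Value=ami_id, Type="String", Overwrite=True)
    sns.publish(TopicArn=topic_arn, Subject="New golden image available",
                Message=f"{ami_id} was created from patched instance {instance_id}")
    return ami_id
```

For large volumes, the waiter's default polling limits may be too short; the waiter's `wait` call accepts a `WaiterConfig` argument to raise them.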

Benefits

This solution provides a pathway to implement DevOps practices on monolithic and legacy applications. Using it provides several benefits:

- Rapid and Continuous Delivery: This solution gives customers a faster code and patch delivery model. Effective use of this solution has reduced end-to-end deployment time from 7 days to 1 hour.

- Infrastructure as Code: This solution is built using Terraform and follows a standard software delivery lifecycle. IaC allows the solution to be replicated in other regions or reused for similar projects.

- Serverless: Storage and compute overhead is minimal because the whole solution is built on AWS serverless technology.

- Disaster Recovery: This solution provides an effective disaster recovery mechanism by copying the golden images to a different region. In case of an outage, the IaC solution takes minimal time to bring up another site in a different region.
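Copying a golden image to a recovery region can be sketched with the EC2 `copy_image` API. This is an assumption-laden illustration rather than the post's implementation: the region names and image name are placeholders, and a client factory is passed in because `copy_image` must be called on a client in the destination region, which then pulls the image from the source region.

```python
from typing import Any, Callable, Dict, List


def copy_golden_image(make_ec2_client: Callable[[str], Any], ami_id: str,
                      source_region: str, dest_regions: List[str],
                      name: str) -> Dict[str, str]:
    """Copy a golden AMI into each disaster recovery region.

    Returns a mapping of destination region to the new AMI ID there.
    """
    copies: Dict[str, str] = {}
    for region in dest_regions:
        # CopyImage runs in the destination region and pulls from the source.
        ec2 = make_ec2_client(region)
        response = ec2.copy_image(SourceRegion=source_region,
                                  SourceImageId=ami_id, Name=name)
        copies[region] = response["ImageId"]
    return copies
```

With boto3 the factory could be `lambda r: boto3.client("ec2", region_name=r)`. The copied AMI gets a new ID in each region, so any region-specific Parameter Store entry would need to be updated separately.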

Summary

Cloud enablement is a paradigm shift that is revolutionizing industries around the globe. Enterprises are migrating to the cloud to take advantage of its high availability and scalability. DevOps principles play an important role in a successful cloud migration journey, but applying DevOps practices to monolithic and legacy applications is challenging because of their rigid architecture. This blog post focuses on how to build a serverless patch management solution that applies DevOps CI/CD practices.

About the authors

Franco Bontorin is a Senior DevOps Consultant who has been helping customers in Canada and the US adopt containers and DevOps best practices for cloud and on-premises environments.

Prajjaval Gupta is a DevOps Consultant with over 5 years of experience in the DevOps field. Prajjaval has been helping enterprise customers adopt a DevOps culture during their migration to the AWS Cloud.