Managing backend requests and frontend notifications in serverless web apps

Web and mobile applications usually interact with a backend service, often via an API. Many front-end applications pass requests for processing, wait for a result, and then display this to the user. This synchronous approach is only one way to handle messages, but modern applications have alternatives to provide a better user experience.

There are three common ways to make and manage requests from your frontend. This blog post explains the benefits and use-cases of each approach. This post references the Ask Around Me example application, which allows users to ask and answer questions in their local geographic area in real time. To learn more, refer to part 1 of the blog application series.

The synchronous model

The synchronous call is the most common API request pattern, where the caller makes a request to an API and then waits for the response:

This type of request is easy to implement and understand because it mirrors the functional call-response pattern many developers are familiar with. The requestor is blocked until the call completes, so it is well suited to simple requests with short execution times. Use cases include: retrieving the contents of a shopping cart, looking up a value in a database, or submitting an email address from a web form.
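As a minimal sketch of this pattern, the following function requests a shopping cart and holds up the calling code path until the response arrives. The `getCart` name, the `/carts/{id}` path, and the injected `fetchImpl` parameter are assumptions for illustration and are not taken from the Ask Around Me application.

```javascript
// Hypothetical synchronous-style call: the caller awaits the full response
// before it can continue. `fetchImpl` is any fetch-compatible function
// (for example, the browser's global fetch).
async function getCart(fetchImpl, baseUrl, cartId) {
  const response = await fetchImpl(`${baseUrl}/carts/${encodeURIComponent(cartId)}`)
  if (!response.ok) {
    // Any backend failure surfaces to the caller as a failed request.
    throw new Error(`Cart request failed with status ${response.status}`)
  }
  return response.json()
}
```

In a browser, this could be called as `getCart(fetch, baseUrl, cartId)`. Nothing is displayed to the user until the promise settles.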

However, when your API interacts with other services or external workflows, the synchronous model can have limitations. In the following ecommerce example, slow performance in any downstream service delays the entire round trip. Additionally, an outage in one of those services may result in an apparent failure of the entire service.

For services with lengthy workflows, you may reach API Gateway’s 29-second integration timeout. In the following ride sharing example, the service responsible for finding available drivers may have a highly variable response time. This request may time out. It also provides a poor user experience, as there is no feedback to the user for a considerable period.

Synchronous requests also have other limitations. You cannot receive more than one response per request, nor can you subscribe to future changes in data. In this example, the API request can only inform callers about drivers at the end of the lengthy request.

The asynchronous model

Asynchronous tasks are common in serverless applications and distributed applications. They allow separate parts of an application to communicate without needing to wait for a synchronous response. Asynchronous workloads often use queues between services to help manage throughput and assist with retry logic.

With asynchronous tasks, the caller hands off the event and continues on to the next task after receiving the acknowledgment response. The caller does not wait for the entire task to complete. The downstream service works on this event while the caller continues servicing other requests. The ecommerce example, converted to an asynchronous flow, looks like this:

In this example, a caller submits an order and receives a response from API Gateway almost immediately. With service integrations, API Gateway then stores the request directly in a durable store such as Amazon SQS or DynamoDB, before any processing has occurred. This results in a relatively consistent caller response time, regardless of downstream service processing time.

The downstream services fetch messages from the SQS queue or the DynamoDB table for processing. If there is a downstream outage, messages are persisted in the queue and may be retried later. From the user’s perspective, the request has been successfully submitted.

The Ask Around Me application handles the publishing of both questions and answers asynchronously. The API passes the user data to a Lambda function that stores the message in an SQS queue. SQS responds immediately to indicate that the message has been stored successfully, ending the API response. Another Lambda function then takes the messages from the SQS queue and processes these independently.
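The hand-off described above might be sketched as follows. This is not the sample application's code. It assumes an API Gateway proxy event with a string `body`, and an injected `sqs` client that follows the AWS SDK for JavaScript v2 call shape (`sendMessage(params).promise()`). The `createEnqueueHandler` name and the `queueUrl` value are hypothetical.

```javascript
// Builds a Lambda-style handler that stores the request in SQS and returns
// an acknowledgment without waiting for downstream processing.
// `sqs` is assumed to expose the AWS SDK for JavaScript v2 call shape:
// sqs.sendMessage(params).promise(). `queueUrl` is a hypothetical value.
function createEnqueueHandler(sqs, queueUrl) {
  return async function handler(event) {
    const result = await sqs.sendMessage({
      QueueUrl: queueUrl,
      MessageBody: event.body
    }).promise()

    // 202 Accepted: the message is stored, but not yet processed.
    return {
      statusCode: 202,
      body: JSON.stringify({ messageId: result.MessageId })
    }
  }
}
```

Returning the message ID gives the frontend an identifier for the request while a separate consumer processes the queue at its own pace.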

Both synchronous and asynchronous requests are useful for different functions in web applications, so it can be helpful to compare their features and behaviors:

| Synchronous requests | Asynchronous requests |
| --- | --- |
| The caller waits until the end of processing for a response. | The caller receives an acknowledgment quickly while processing continues. |
| Waiting may incur cost. | Minimizes the cost of waiting. |
| Downstream slowness or outages affect the overall request. | Queuing separates ingestion of the request from the processing of the request. |
| Passes payloads between steps. | More often passes transaction identifiers. |
| Failure affects the entire request. | Failure only affects a segment of the request. |
| Easy to implement. | Moderate complexity in implementation. |

Handling response values and state for asynchronous requests

With asynchronous processes, you cannot pass a return value back to the caller in the same way as you can for synchronous processes. Beyond the initial acknowledgment that the request has been received, there is no return path to provide further information. There are a couple of options available to web and mobile developers to track the state of inflight requests:

- Polling: the initial request returns a tracking identifier. You create a second API endpoint for the frontend to check the status of the request, referencing the tracking ID. Use DynamoDB or another data store to track the state of the request.

- WebSocket: this is a bidirectional connection between the frontend client and the backend service. It allows you to send additional information after the initial request is completed. Your backend services can continue to send data back to the client by using a WebSocket connection.

Polling is a simple mechanism to implement for many systems but can result in many empty calls. There is also a delay between data availability and the client being notified. WebSockets provide notifications that are closer to real time and reduce the number of messages between the client and backend system. However, implementing WebSockets is often more complex.
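To make the polling option concrete, here is a minimal sketch of a client-side polling loop. The `pollForResult` name, the `PENDING` state value, and the `checkStatus` function (which would call the second API endpoint with the tracking ID) are assumptions for illustration.

```javascript
// Polls a status function until the request leaves the PENDING state or
// the attempt limit is reached. `checkStatus` is a hypothetical function
// that calls the status endpoint with the tracking ID and resolves to an
// object such as { state: 'PENDING' } or { state: 'COMPLETE', result }.
async function pollForResult(checkStatus, trackingId, options = {}) {
  const { intervalMs = 1000, maxAttempts = 30 } = options
  const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms))

  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const status = await checkStatus(trackingId)
    if (status.state !== 'PENDING') {
      return status
    }
    // An "empty" call: nothing new yet, so wait before asking again.
    if (attempt < maxAttempts) {
      await sleep(intervalMs)
    }
  }
  throw new Error(`Request ${trackingId} still pending after ${maxAttempts} attempts`)
}
```

The `intervalMs` value sets the trade-off described above: a shorter interval reduces notification delay but increases the number of empty calls.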

Using AWS IoT Core for real-time messaging

In both the synchronous and asynchronous models, it’s assumed that the caller makes a request and is only interested in the final result of that request. This doesn’t allow for partial information, such as the percentage of a task complete, or being notified continuously as data changes.

Modern web applications commonly use the publish-subscribe pattern to receive notifications as data changes. From receiving alerts when new email arrives to providing dashboard analytics, this method allows for much richer streams of events from backend systems.

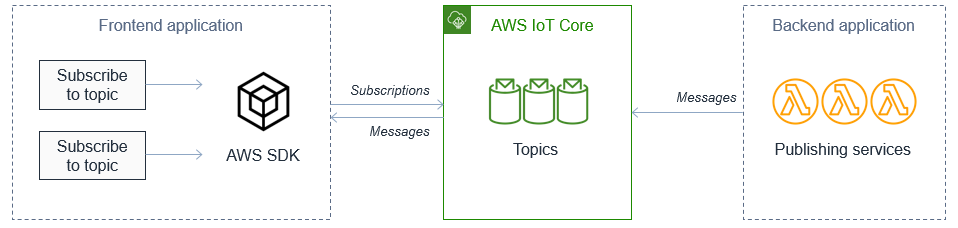

In Ask Around Me, the application uses this pattern when listening for new questions from the user’s local area. The frontend subscribes to the geohash value of the user’s location via the AWS SDK. It then waits for messages published by the backend to this topic.

The SDK automatically manages the WebSocket connection and also handles many common connectivity issues in web apps. The messages are categorized using topics, which are strings defining channels of messages.

The AWS IoT Core service manages broadcasts between backend publishers and frontend subscribers. This enables fan-out functionality, which occurs when multiple subscribers are listening to the same topic. You can broadcast messages to thousands of frontend devices using this mechanism. For web application integration, this is preferable to using Amazon SNS to implement publish-subscribe.
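On the publishing side, a backend function might look like the following sketch. It assumes an SQS-triggered Lambda event (`event.Records[].body`) and an injected `iotData` client that follows the AWS SDK for JavaScript v2 call shape (`publish({ topic, payload, qos }).promise()`). The `createPublishHandler` name, the `geohash` field, and the `questions/` topic prefix are hypothetical and may differ from the sample application.

```javascript
// Builds a Lambda-style handler for an SQS event source. Each record is
// published to a topic derived from the question's geohash.
// `iotData` is assumed to expose the AWS SDK for JavaScript v2 call shape:
// iotData.publish({ topic, payload, qos }).promise().
// The `geohash` field and the `questions/` topic prefix are hypothetical.
function createPublishHandler(iotData) {
  return async function handler(event) {
    let published = 0
    for (const record of event.Records) {
      const question = JSON.parse(record.body)
      await iotData.publish({
        topic: `questions/${question.geohash}`,
        payload: JSON.stringify(question),
        qos: 0
      }).promise()
      published += 1
    }
    // Every subscriber to a topic receives the message (fan-out).
    return { published }
  }
}
```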

The IotData class in the AWS SDK returns a client that uses the MQTT protocol. Once the frontend application establishes the connection, it returns messages, errors, and the connection status via callbacks:

```javascript
mqttClient.on('connect', function () {
  console.log('mqttClient connected')
})

mqttClient.on('error', function (err) {
  console.log('mqttClient error: ', err)
})

mqttClient.on('message', function (topic, payload) {
  const msg = JSON.parse(payload.toString())
  console.log('IoT msg: ', topic, msg)
})
```

For more details on how to implement MQTT WebSocket connectivity for your application, see the Ask Around Me sample application code.
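As a rough illustration of subscribing to a geohash topic, the sketch below wraps the same callbacks in a helper. It assumes `mqttClient` exposes MQTT.js-style `on` and `subscribe` methods, as the callbacks above suggest. The `listenForQuestions` name and the `questions/` topic prefix are hypothetical.

```javascript
// Subscribes to the topic for one geohash and forwards parsed messages to
// `onQuestion`. `mqttClient` is assumed to follow an MQTT.js-style
// interface (on, subscribe). The `questions/` topic prefix is hypothetical.
function listenForQuestions(mqttClient, geohash, onQuestion) {
  const topic = `questions/${geohash}`

  mqttClient.on('connect', function () {
    // Subscribe each time the client connects.
    mqttClient.subscribe(topic)
  })

  mqttClient.on('message', function (receivedTopic, payload) {
    // Ignore messages for other topics this client may be subscribed to.
    if (receivedTopic !== topic) return
    onQuestion(JSON.parse(payload.toString()))
  })

  return topic
}
```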

Combining multiple approaches for your frontend application

Many frontend applications can combine these models depending on the request type. The Ask Around Me application uses multiple approaches in managing the state of user questions:

- When the application starts, it retrieves an initial set of questions from the synchronous API endpoint. This returns the available list of questions up to this point in time.

- Simultaneously, the frontend subscribes to the geohash topic via AWS IoT Core. Any new questions for this geohash location are sent from the backend processing service to the frontend via this topic. This allows the frontend to receive new questions without subsequent API calls.

- When a new question is posted, it is saved to the relevant SQS queue and acknowledged. The question is processed asynchronously by a backend process, which sends updates to the topic.
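The first two steps above can be sketched as a single frontend helper. This is an illustration under stated assumptions rather than the sample application's code: `fetchInitialQuestions` is a hypothetical function that calls the synchronous API, `mqttClient` is an MQTT.js-style client already subscribed to the geohash topic, and each question is assumed to carry a unique `id`.

```javascript
// Combines an initial synchronous load with real-time updates.
// `fetchInitialQuestions` is a hypothetical function that calls the
// synchronous API; `mqttClient` is an MQTT.js-style client already
// subscribed to the geohash topic. Questions are assumed to carry an `id`.
async function startQuestionFeed(fetchInitialQuestions, mqttClient, onChange) {
  const questions = new Map()

  // Register the listener first so that messages arriving during the
  // initial load are not missed.
  mqttClient.on('message', function (topic, payload) {
    const question = JSON.parse(payload.toString())
    questions.set(question.id, question)
    onChange(Array.from(questions.values()))
  })

  const initial = await fetchInitialQuestions()
  for (const question of initial) {
    // Keep any newer copy that already arrived over the topic.
    if (!questions.has(question.id)) {
      questions.set(question.id, question)
    }
  }
  onChange(Array.from(questions.values()))
  return questions
}
```

Keying the questions by `id` lets an update from the topic replace an existing entry instead of duplicating it.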

There are several benefits to combining synchronous, asynchronous, and real-time messaging approaches like this. Most importantly, the user experience remains consistent. The user receives immediate feedback when posting new questions and answers, while longer-running processes are managed asynchronously.

When new information becomes available, the frontend is notified in near-real time. This happens without needing to poll an API endpoint or have the user refresh the user interface. This also reduces the number of unnecessary API calls on the backend service, reducing the cost of running this application. Finally, this uses scalable managed services so the frontend application can support large numbers of users without impacting performance.

Conclusion

Web applications commonly use synchronous APIs when communicating with backend services. For longer-running processes, asynchronous workflows can offer an improved user experience and help manage scaling. By using durable message stores like SQS or DynamoDB, you can separate the request ingestion and response from the request processing.

In this post, I show how modern web applications use real-time messaging via WebSockets to improve the user experience. This provides a transport mechanism for pushing state updates from the backend to the frontend client. The AWS IoT Core service can fan out messages using topics, broadcasting messages to large numbers of frontend subscribers.

To see these three methods in an example frontend application, read more about the Ask Around Me example application.